Introduction

The era of Vibe Coding is over! At the beginning of 2026, Zhipu AI’s GLM-5 made a stunning debut, reshaping the game with “Agentic Engineering”. At a fraction of Claude’s cost, this Chinese model competes head-on with Opus 4.5!

On the night of February 7, a mysterious model codenamed “Pony Alpha” quietly went online, causing a stir in the tech community.

When we fed it a piece of messy code that had taken us a day to fix, it restructured it effortlessly. From a single simple prompt, it produced a complete web app with 35 channels and a smooth UI.

This extraordinary engineering capability directly validates Andrej Karpathy’s assertion from just days earlier:

Vibe Coding is a thing of the past; the new game has only one name — Agentic Engineering.

Shortly after, Opus 4.6 and GPT-5.3-Codex launched, both focused squarely on “long-running tasks and systems engineering”. Just as everyone assumed this would be another solo show by the closed-source giants, the identity of Pony Alpha was revealed: it is GLM-5.

The First Open Source Model to Compete with Silicon Valley Giants

GLM-5 is the first open-source model to directly challenge the system-level engineering capabilities of Silicon Valley giants. Following the revelation, Zhipu’s stock surged by 32%!

The Opus Moment for Chinese Models

After trying it hands-on, we had only one reaction: it’s incredibly powerful!

If Claude Opus represents the pinnacle of closed-source models, the release of GLM-5 marks a comparable moment for Chinese open-source models.

In the authoritative ranking by Artificial Analysis, GLM-5 ranks fourth globally and first among open-source models.

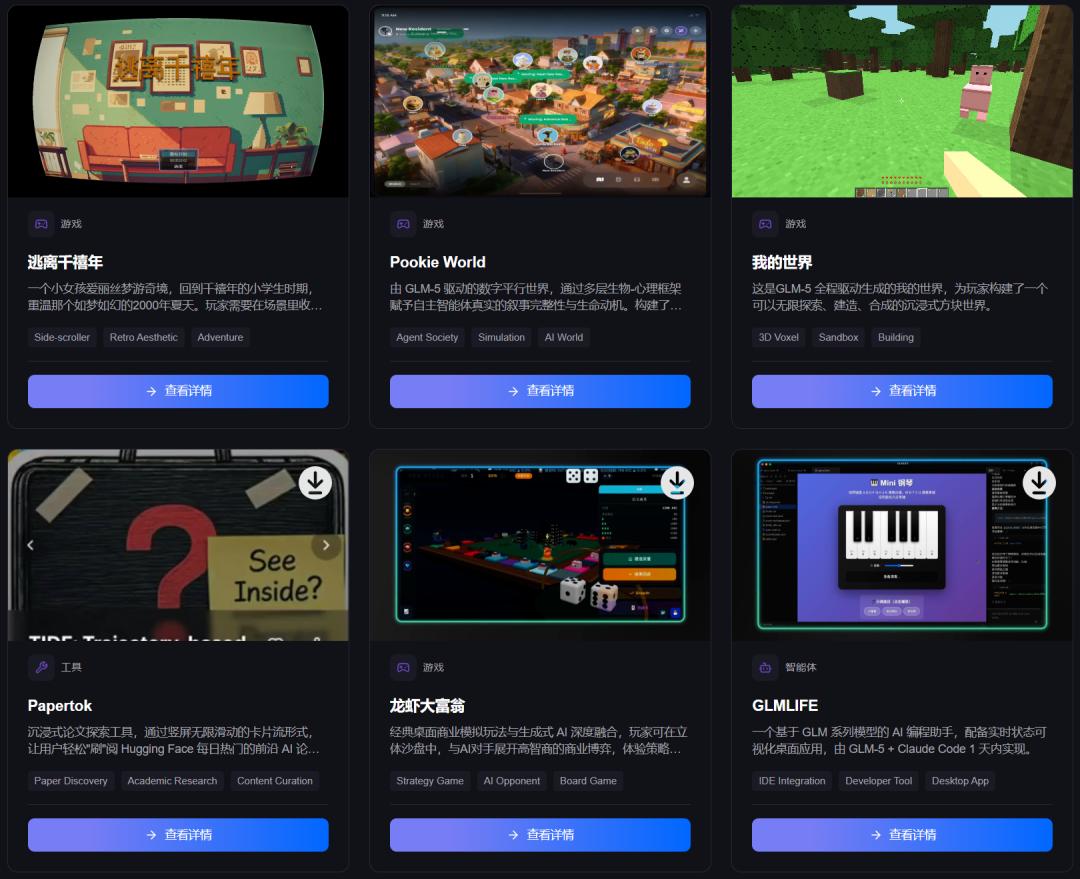

On release day, more than ten games and tools built by creators with GLM-5 were showcased and made available to try, with more apps set to launch in major app stores.

This signifies that GLM-5 is transforming “AI programming” into “AI delivery”, achieving a seamless transition from productivity tools to commercial products.

Try it here: showcase.z.ai

For example, “Pookie World” is a GLM-5-powered digital parallel world that uses a multi-layered bio-psychological framework to give its autonomous agents genuine narrative coherence and motivations of their own.

There’s also a “Minecraft” replica that is nearly identical in look and gameplay.

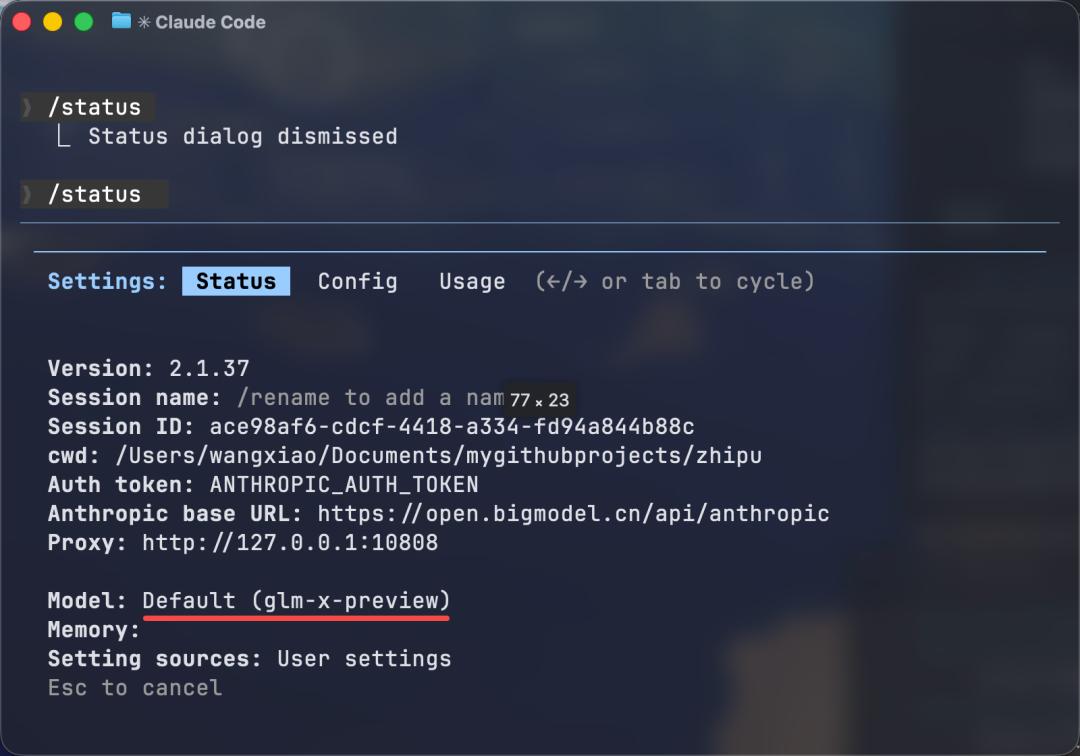

We also used Claude Code as a shell, pointing it directly at GLM-5’s API for multi-dimensional testing. Whether the target was a Next.js full-stack project or a native macOS/iOS app, it handled the complete workflow, from requirements analysis and architecture design to code writing and end-to-end debugging.

After completing numerous projects, we came away with one impression:

In some ways, GLM-5 might be a model that can change the industry landscape.

Complex Logic Challenge: “Infinite Knowledge Universe”

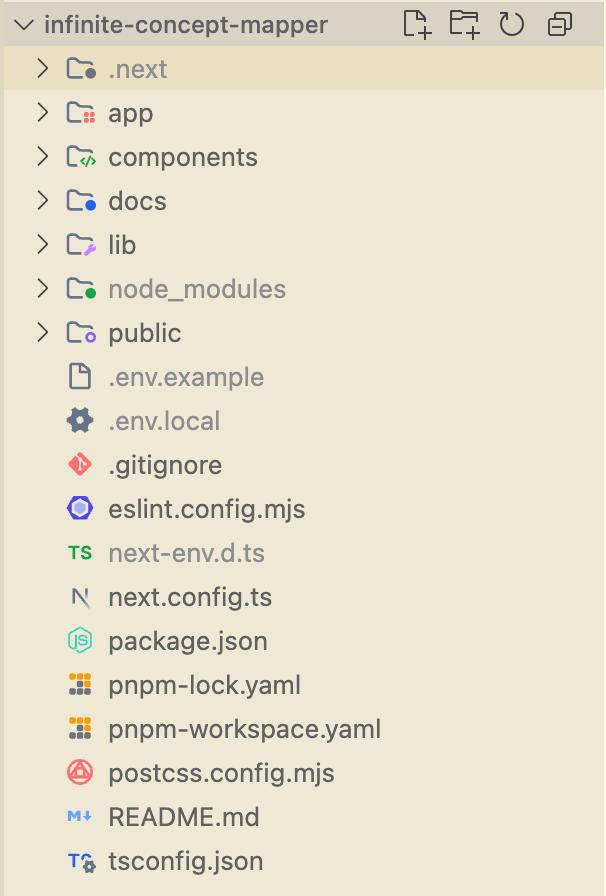

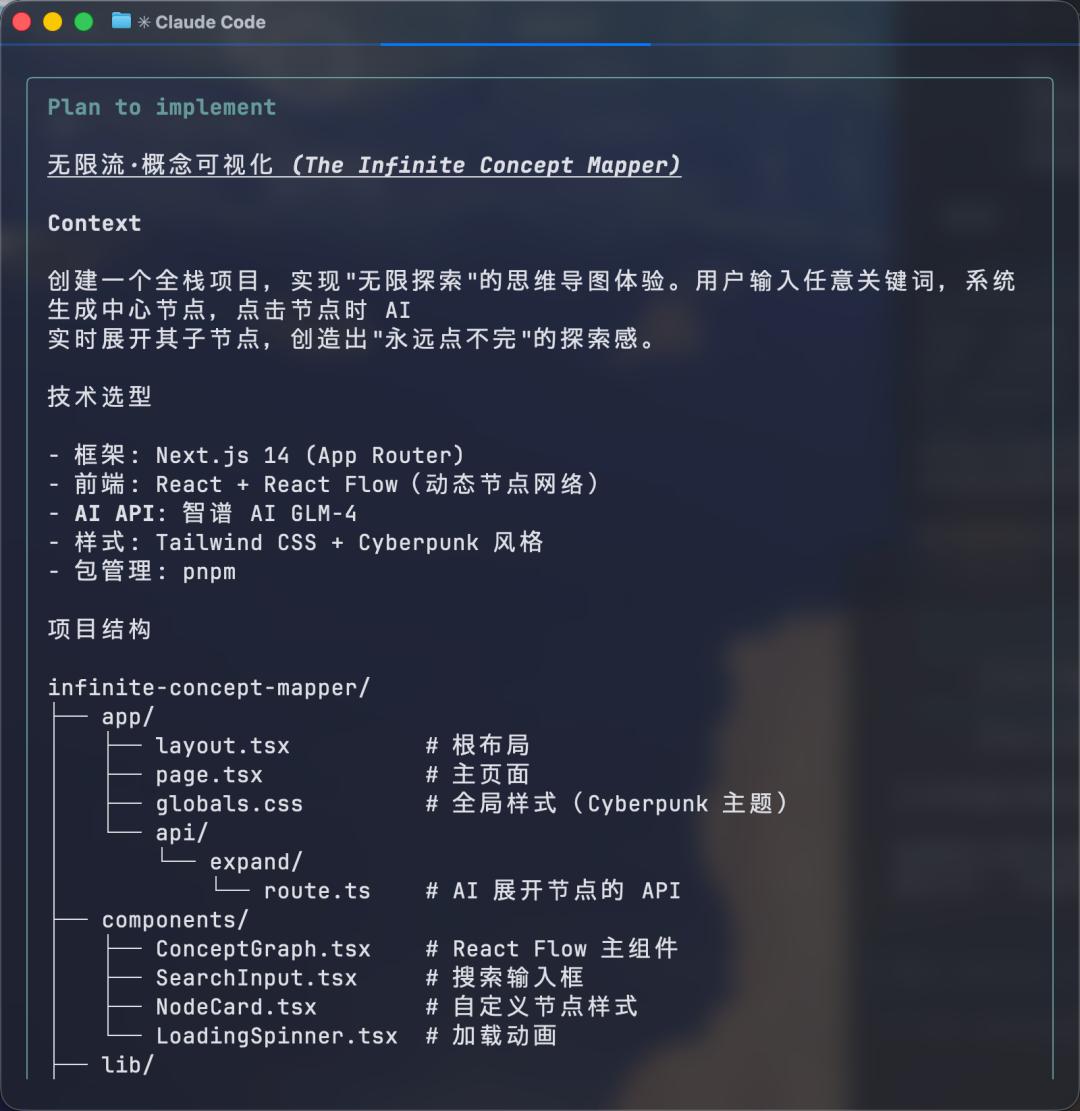

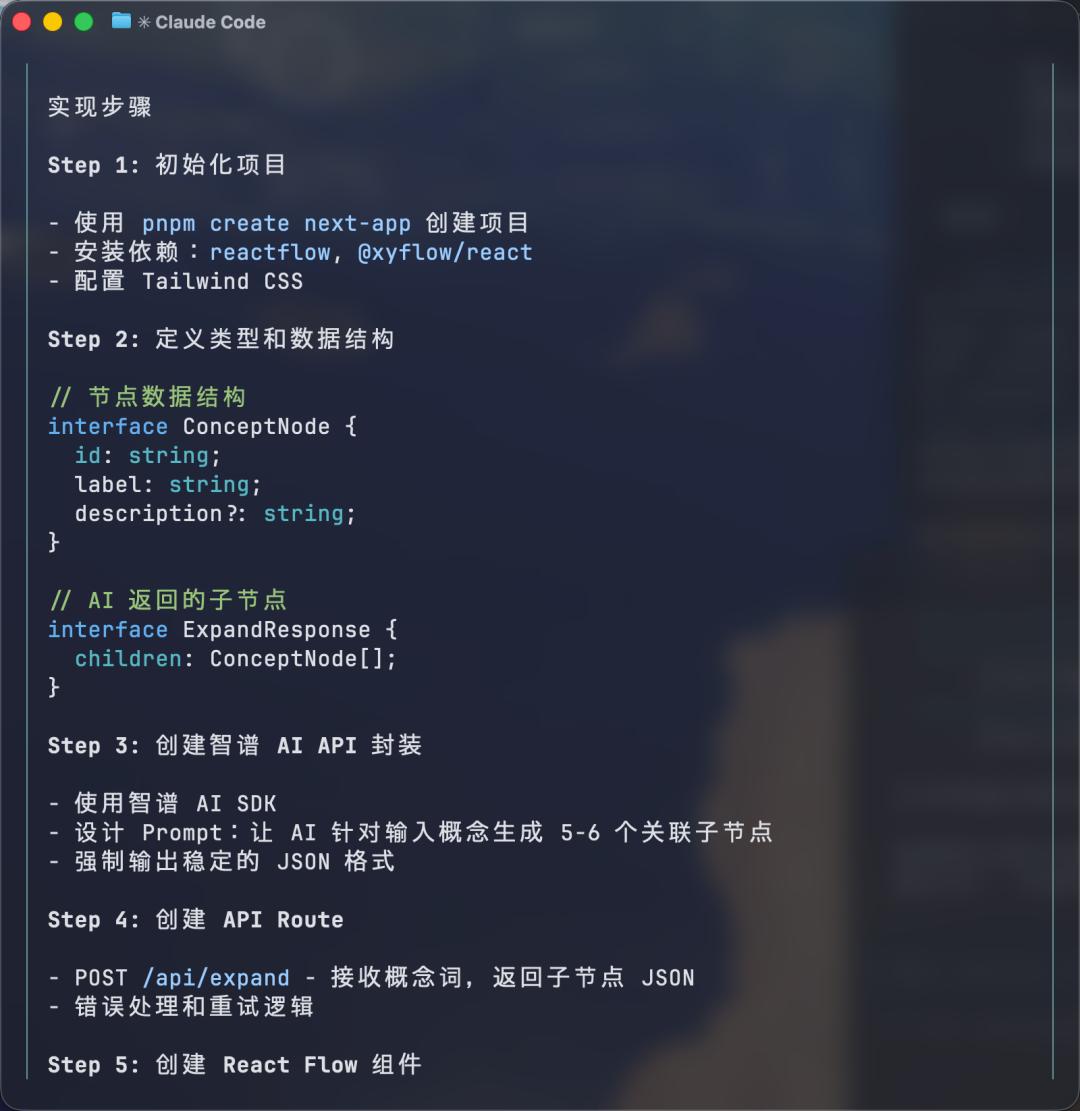

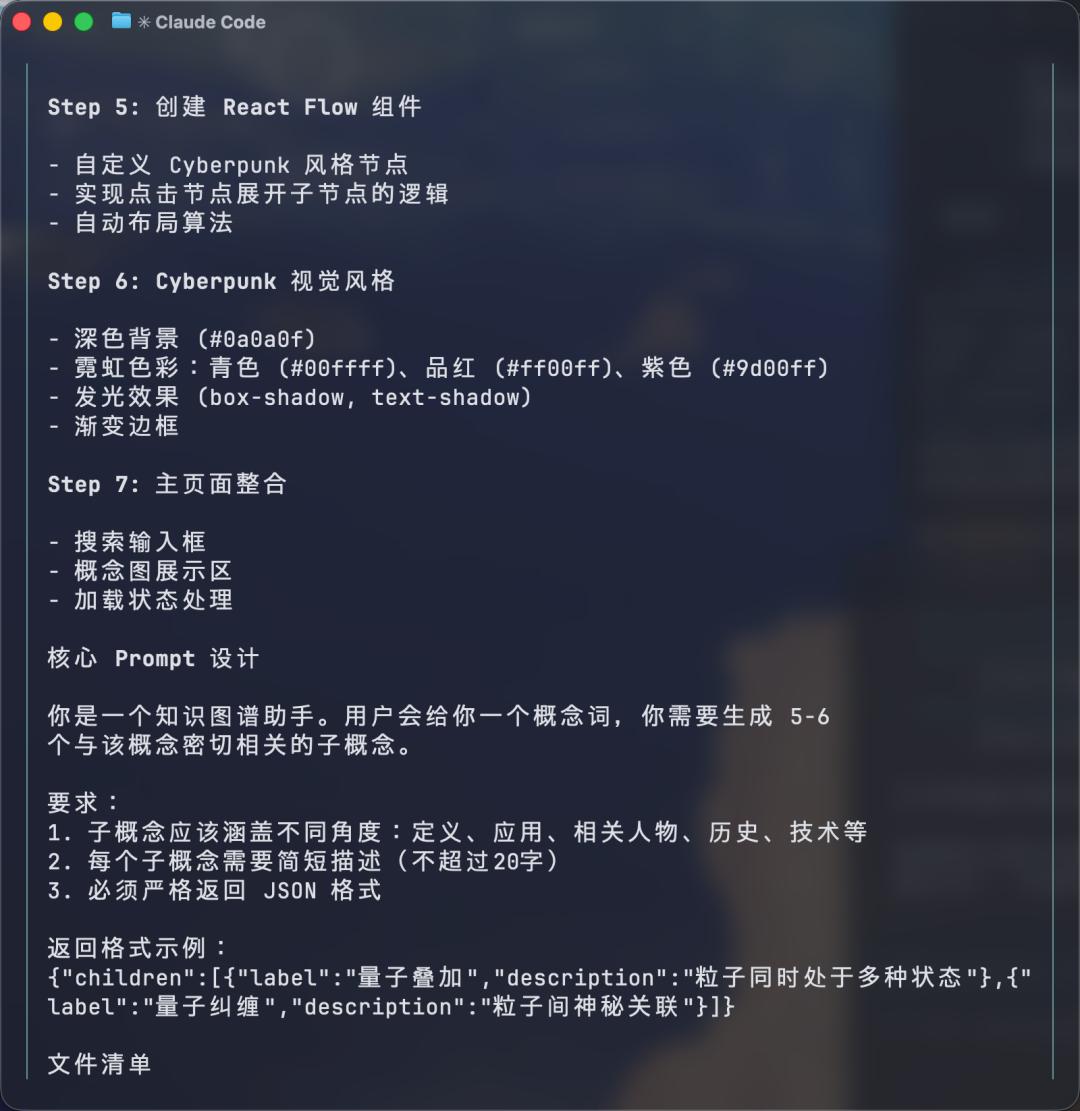

If you think writing a webpage is simple, try getting an AI to handle an “infinite flow” project with strict JSON format requirements and dynamic rendering.

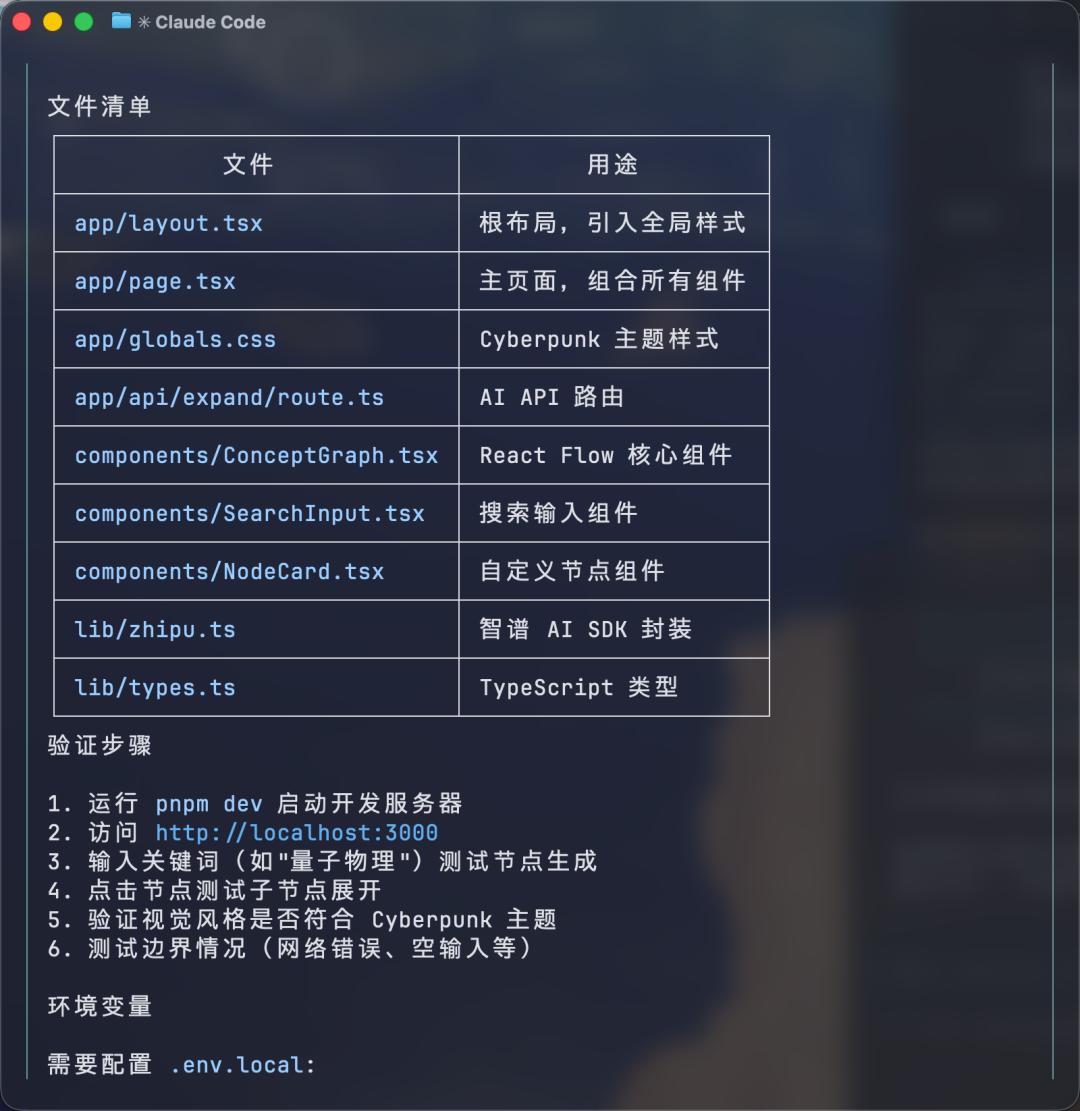

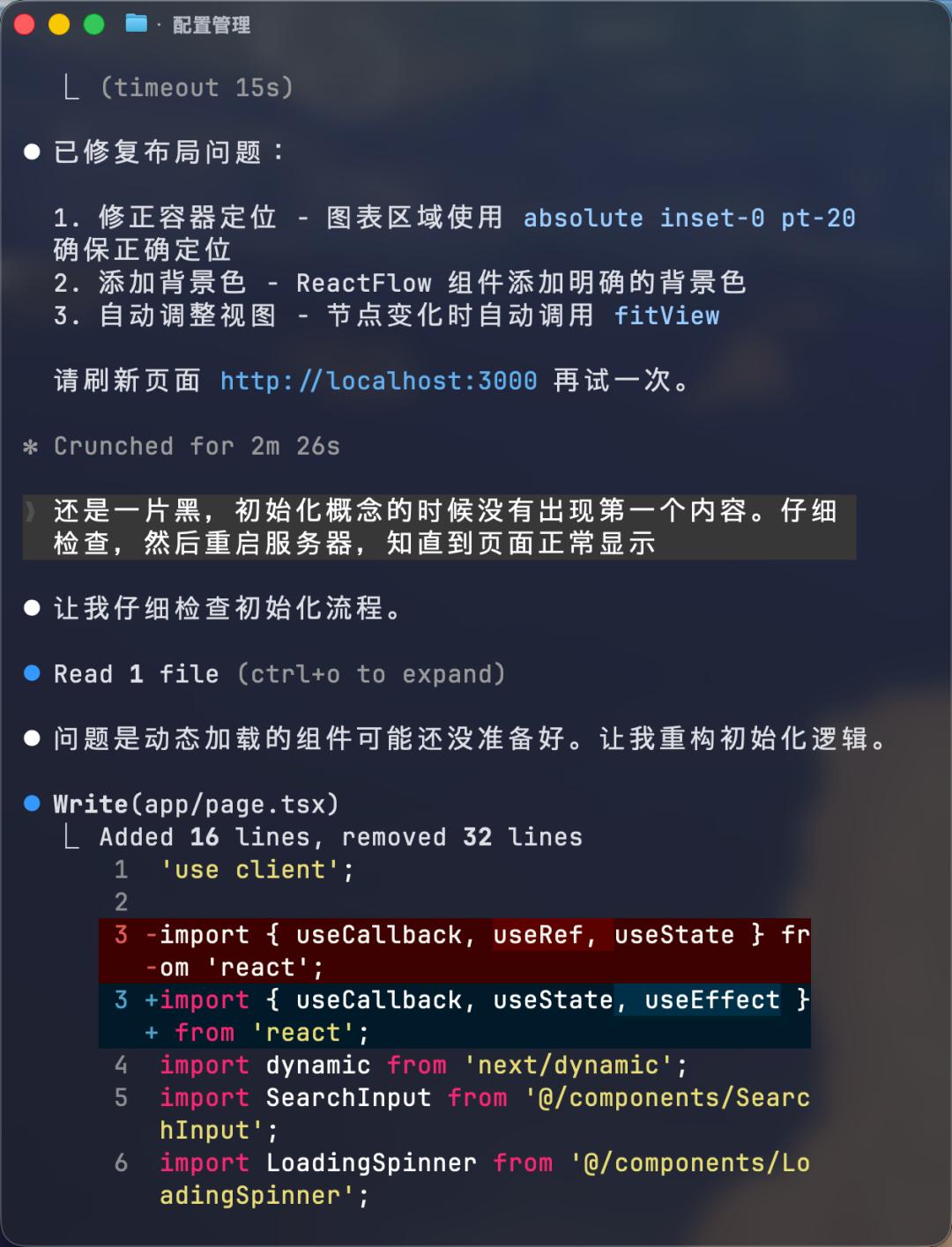

Take our first test, the “Infinite Knowledge Universe”. This is a typical complex project with a separate front end and back end, involving dynamic rendering with React Flow, Next.js API route design, and extremely strict JSON output requirements.

GLM-5’s performance here is nothing short of astonishing.

Not only did it generate the entire project file structure in one pass, but its debugging logic was also impressive.

When we encountered a rendering bug, we simply said, “the page is still black, the first content did not appear during initialization…” GLM-5 immediately pinpointed it as a loading timing issue and quickly provided a fix.

The complete prompt was as follows:

- Infinite Flow · Concept Visualization

- Core Concept: This is a mind map that can be “endlessly explored”. Users enter any keyword (such as “quantum physics” or “Dream of the Red Chamber”), and the system generates a central node. When the user clicks any node, the AI expands its sub-nodes in real time.

- Stunning Moment: Users feel they are interacting with an omniscient brain. Even when they click an obscure concept, the AI accurately expands the next level, creating a breathtaking sense of “infinite exploration”.

- Visual and Communication:

  - Use React Flow or ECharts to create dynamic, draggable node networks.

  - Use a cyberpunk or minimalist color scheme, ideal for screenshots shared on social media.

- Feasibility Plan:

  - Frontend: React + React Flow (responsible for drawing).

  - Backend: Next.js API Route.

  - Prompt Strategy: No complex context memory is needed; just have the AI generate 5-6 related sub-nodes for the “current node” and return them in JSON format.

  - Key Difficulty: Ensuring the model outputs a stable JSON format (an excellent scenario for testing the model’s instruction-following ability).
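The “stable JSON” difficulty usually comes down to validating every model reply before it reaches React Flow. Below is a minimal TypeScript sketch of such a guard for the API route. It is not the code GLM-5 generated: the `ConceptNode` shape (`label`, `summary`) and the `parseSubNodes` name are hypothetical, and only the 5-6 node count comes from the prompt.

```typescript
// Hypothetical node shape; only the 5-6 node count comes from the prompt.
interface ConceptNode {
  label: string;
  summary: string;
}

// Parse a raw model reply. Returns the nodes only if the reply is a JSON array of
// 5-6 objects with string `label` and `summary` fields; otherwise returns null
// so the caller can re-prompt the model.
function parseSubNodes(raw: string): ConceptNode[] | null {
  // Models sometimes wrap JSON in a Markdown code fence; strip it before parsing.
  const cleaned = raw
    .trim()
    .replace(/^`{3}(?:json)?\s*/, "")
    .replace(/\s*`{3}$/, "");
  let data: unknown;
  try {
    data = JSON.parse(cleaned);
  } catch {
    return null; // not valid JSON at all
  }
  if (!Array.isArray(data) || data.length < 5 || data.length > 6) return null;

  const nodes: ConceptNode[] = [];
  for (const item of data) {
    if (typeof item !== "object" || item === null) return null;
    const { label, summary } = item as Record<string, unknown>;
    if (typeof label !== "string" || typeof summary !== "string") return null;
    nodes.push({ label, summary });
  }
  return nodes;
}
```

Returning `null` instead of throwing keeps the route simple: on a bad reply it can just ask the model again, which is exactly the instruction-following behavior the test is probing.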

More Complex Middleware Project Completed in 11 Minutes

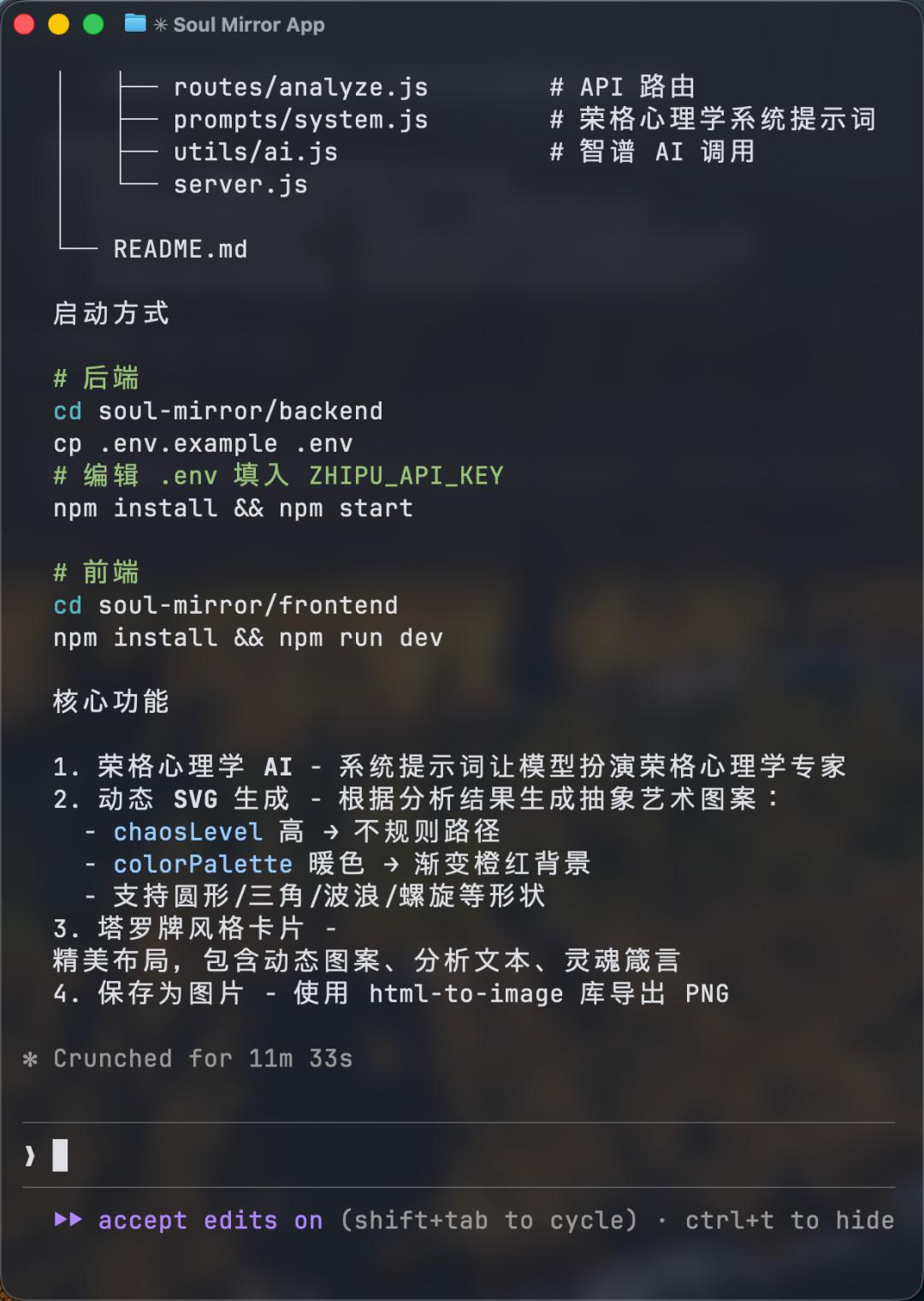

Next, we increased the difficulty by asking it to develop a psychological analysis application named “Soul Mirror”.

The requirements were divided into two steps:

Step 1

Logic Design: Act as a Jungian psychology expert and output a JSON object containing analysis text and visual parameters.

Step 2

Frontend Implementation: Dynamically render SVG from those parameters to produce Tarot-style cards.

- Prompt

  - Step 1: Logic Design

    - We are developing a psychological analysis application called “Soul Mirror”.

    - Interaction Flow:

      - Guide Page: Users enter their current state or a source of confusion.

      - Analysis Page: The AI asks two deep questions to guide users in exploring their inner selves.

      - Result Page: Based on the dialogue, the AI generates a “soul card”.

    - Please design the core prompt (system instruction): the model must act as a Jungian psychology expert, and in the final step it must output a JSON object containing:

      - analysis: the psychological analysis text.

      - visualParams: a set of parameters for generating abstract art, such as colorPalette (an array of hex colors), shapes (circle/triangle/wave), and chaosLevel (a numeric degree of chaos).

  - Step 2: Frontend Implementation and SVG Rendering

    - Please write the Next.js frontend code, focusing on a ResultCard component.

    - Requirements:

      - Accept visualParams from Step 1.

      - Use SVG to draw graphics dynamically. For example: if chaosLevel is high, use irregular paths; if colorPalette is warm, use an orange-red gradient background.

      - Make the card layout exquisite, resembling a Tarot card: a dynamic SVG pattern in the center, with the user’s name and an AI-generated “soul saying” at the bottom.

      - Add a “Save as Image” button (using the html-to-image library).
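To make the Step 2 mapping concrete, here is a small TypeScript sketch that turns `visualParams` into SVG markup. It illustrates the idea under assumptions and is not what GLM-5 produced: `chaosLevel` is assumed to run from 0 to 1 with 0.5 as the cutoff for irregular paths, “warm” is approximated as “red channel above blue channel”, and the helper names (`isWarm`, `blobPath`, `renderCardSvg`) are hypothetical. `shapes` is kept in the type but not drawn.

```typescript
// Field names follow the Step 1 prompt; the 0-1 range for chaosLevel is an assumption.
type Shape = "circle" | "triangle" | "wave";

interface VisualParams {
  colorPalette: string[]; // hex colors, e.g. "#ff6a00"
  shapes: Shape[]; // carried through but not drawn in this sketch
  chaosLevel: number; // assumed: 0 = calm, 1 = maximally chaotic
}

// Crude warmth heuristic (an assumption): a color counts as warm if red outweighs blue.
function isWarm(hex: string): boolean {
  const m = /^#?([0-9a-f]{6})$/i.exec(hex);
  if (!m) return false;
  const n = parseInt(m[1], 16);
  return ((n >> 16) & 0xff) > (n & 0xff);
}

// Small seeded PRNG (mulberry32) so the same params always produce the same card.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Closed path around (cx, cy): at chaos 0 every vertex sits on the circle;
// at chaos 1 each radius is jittered by up to +/-50%, giving an irregular blob.
function blobPath(cx: number, cy: number, radius: number, chaosLevel: number, seed = 1): string {
  const rand = mulberry32(seed);
  const chaos = Math.min(Math.max(chaosLevel, 0), 1);
  const points = 24;
  const parts: string[] = [];
  for (let i = 0; i < points; i++) {
    const angle = (2 * Math.PI * i) / points;
    const r = radius * (1 + chaos * (rand() - 0.5));
    const x = (cx + r * Math.cos(angle)).toFixed(1);
    const y = (cy + r * Math.sin(angle)).toFixed(1);
    parts.push(`${i === 0 ? "M" : "L"}${x},${y}`);
  }
  return parts.join(" ") + " Z";
}

// Build the card's SVG markup from visualParams.
function renderCardSvg(p: VisualParams, width = 300, height = 500): string {
  // Majority-warm palettes get the orange-red gradient from the prompt.
  const warm = p.colorPalette.filter(isWarm).length * 2 > p.colorPalette.length;
  const from = warm ? "#ff8a00" : (p.colorPalette[0] ?? "#222233");
  const to = warm ? "#d7263d" : (p.colorPalette[p.colorPalette.length - 1] ?? "#111118");
  const cx = width / 2;
  const cy = height / 2;
  const r = width / 3;
  // Above the assumed 0.5 cutoff, draw an irregular path; otherwise a clean circle.
  const figure =
    p.chaosLevel > 0.5
      ? `<path d="${blobPath(cx, cy, r, p.chaosLevel)}" fill="none" stroke="#ffffff"/>`
      : `<circle cx="${cx}" cy="${cy}" r="${r}" fill="none" stroke="#ffffff"/>`;
  return (
    `<svg xmlns="http://www.w3.org/2000/svg" width="${width}" height="${height}">` +
    `<defs><linearGradient id="bg" x1="0" y1="0" x2="0" y2="1">` +
    `<stop offset="0" stop-color="${from}"/><stop offset="1" stop-color="${to}"/>` +
    `</linearGradient></defs>` +
    `<rect width="100%" height="100%" fill="url(#bg)"/>` +
    figure +
    `</svg>`
  );
}
```

A React `ResultCard` could inject this markup or build the same elements as JSX; seeding the randomness keeps the saved image identical to what the user saw on screen.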

Throughout the process, its grasp of our intent often made us wonder whether we were actually using Opus 4.5.

But upon closer inspection, it was indeed GLM-5.

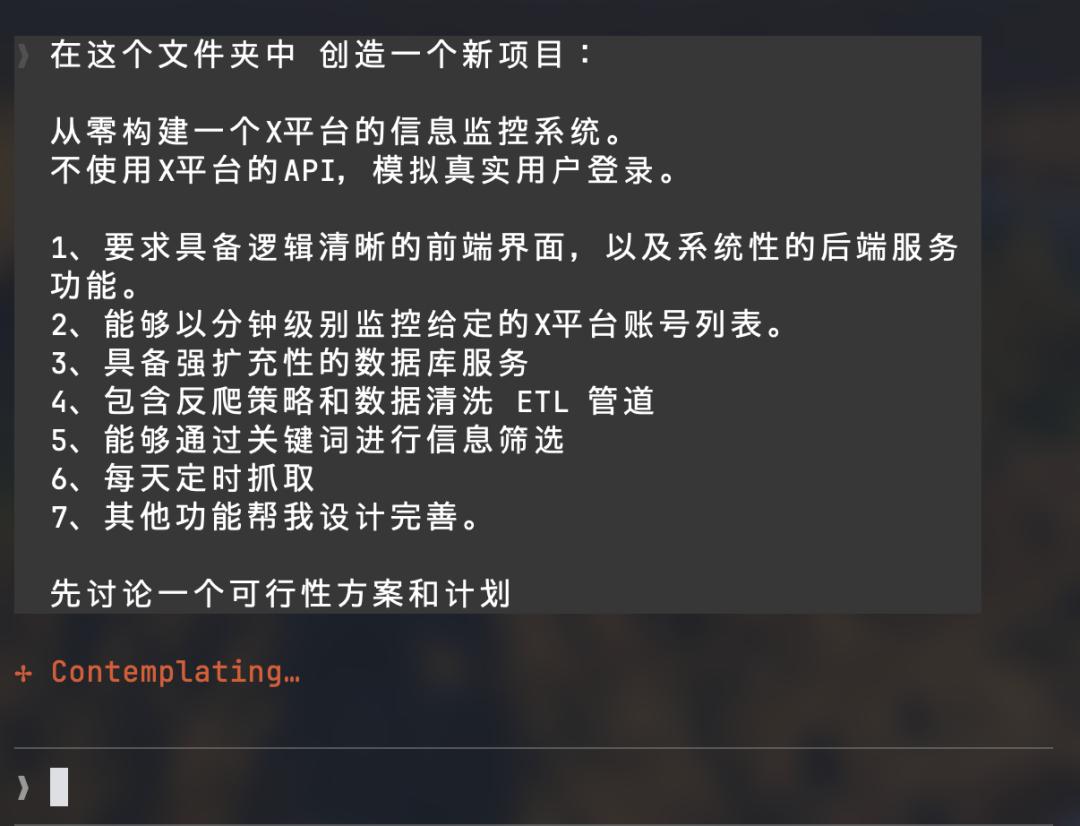

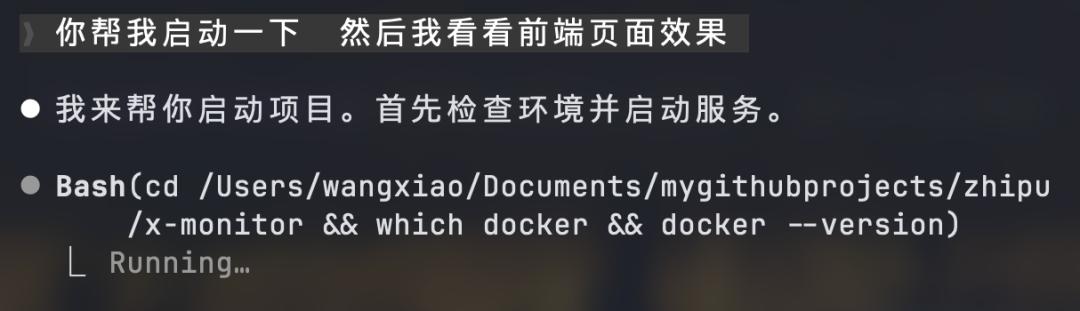

25-Minute Continuous Operation: True Agentic Coding

To push GLM-5 further, we asked it to act as a real user and build a monitoring system for the X platform without using any APIs.

Result: 25 minutes, all in one go.

As shown, GLM-5 autonomously invoked various tools and agents during the run, planned tasks, broke them into steps, and fixed errors by consulting documentation.

Sustaining logical coherence over such a long stretch was unimaginable for previous open-source models.

Once it finished, a single command was enough for GLM-5 to run the project automatically.

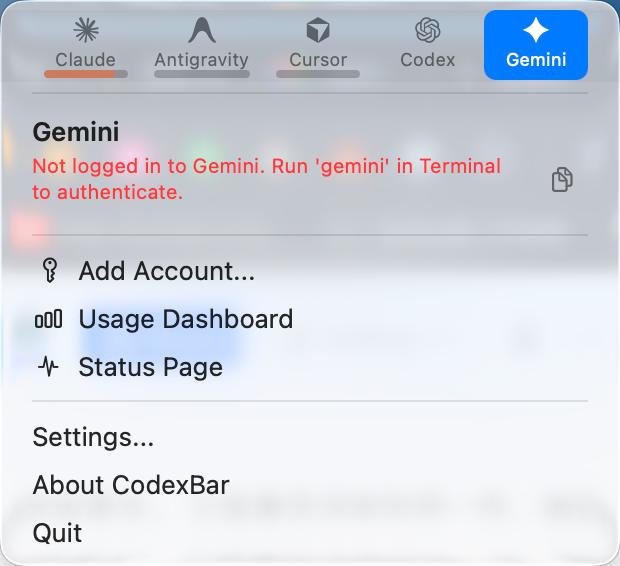

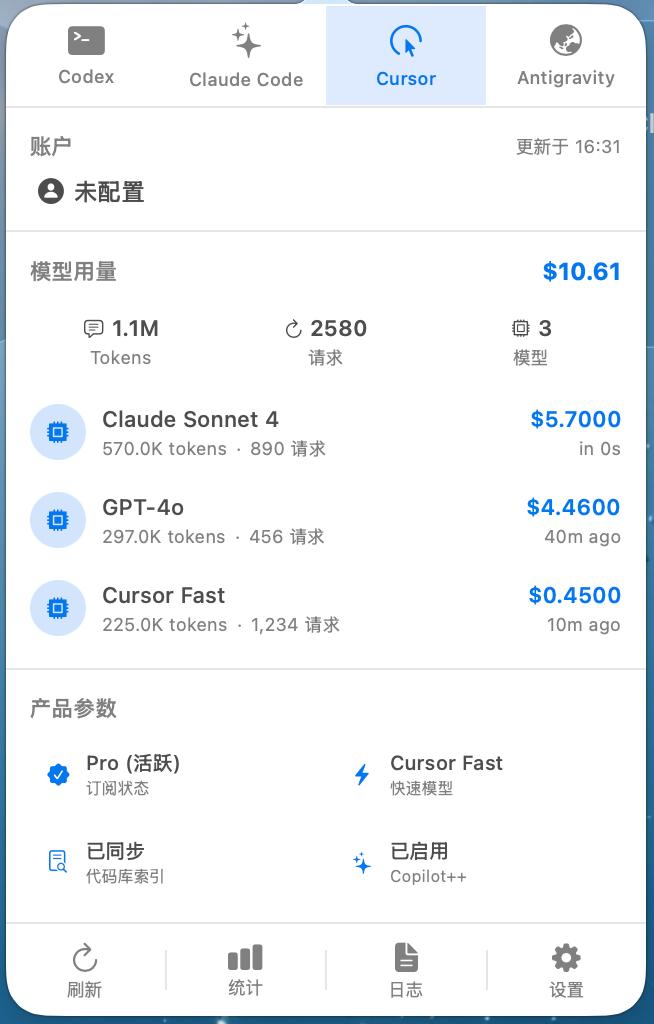

Visual Understanding: Creating an App from an Image

Finally, we fed GLM-5 a screenshot of an AI quota-tracking tool, an open-source project by the creator of OpenClaw, and simply asked:

Create a macOS app based on this.

Before long, it truly “replicated” a similar product.

Although the data was mocked, the UI layout and interaction logic were nearly perfectly replicated.

This shows not only visual understanding but also the ability to turn visuals into working SwiftUI code.

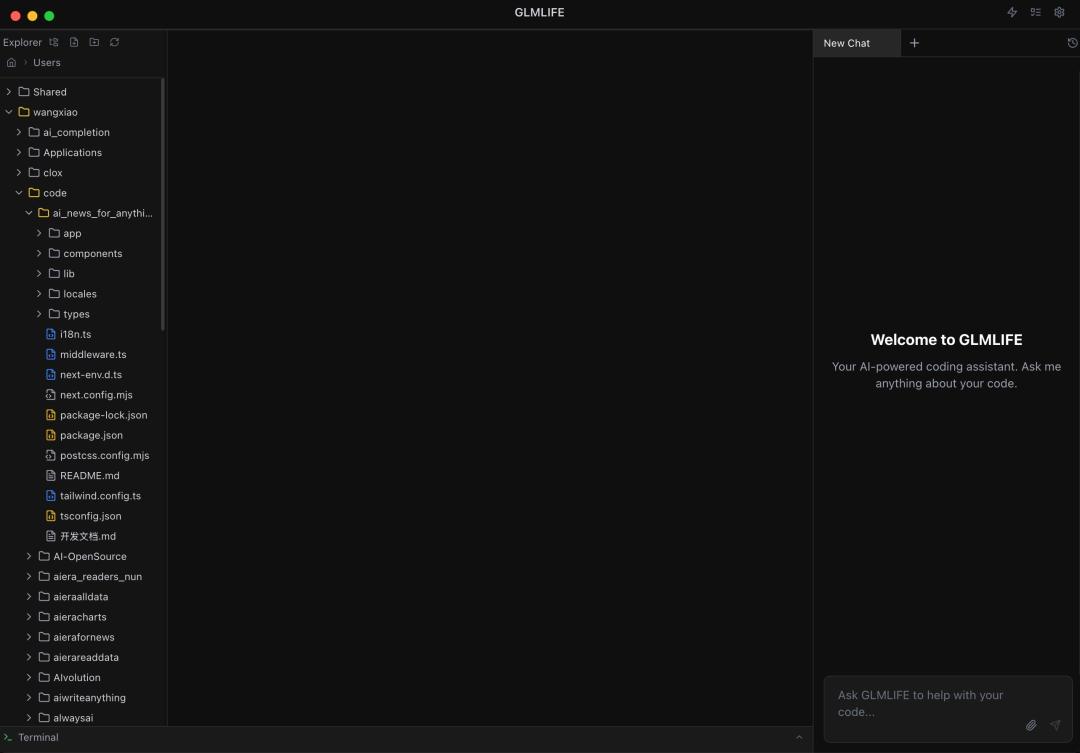

Expertly Crafted: One-Day Replication of “Basic Cursor”

To test GLM-5’s engineering limits, a seasoned developer decided to take on a big challenge:

Build an AI programming assistant with a desktop UI from scratch — GLMLIFE.

This is akin to creating a simplified version of Cursor.

Given the task, GLM-5 did not rush to write code; instead, it produced a professional architecture design document (PLAN.md) and made mature technology choices:

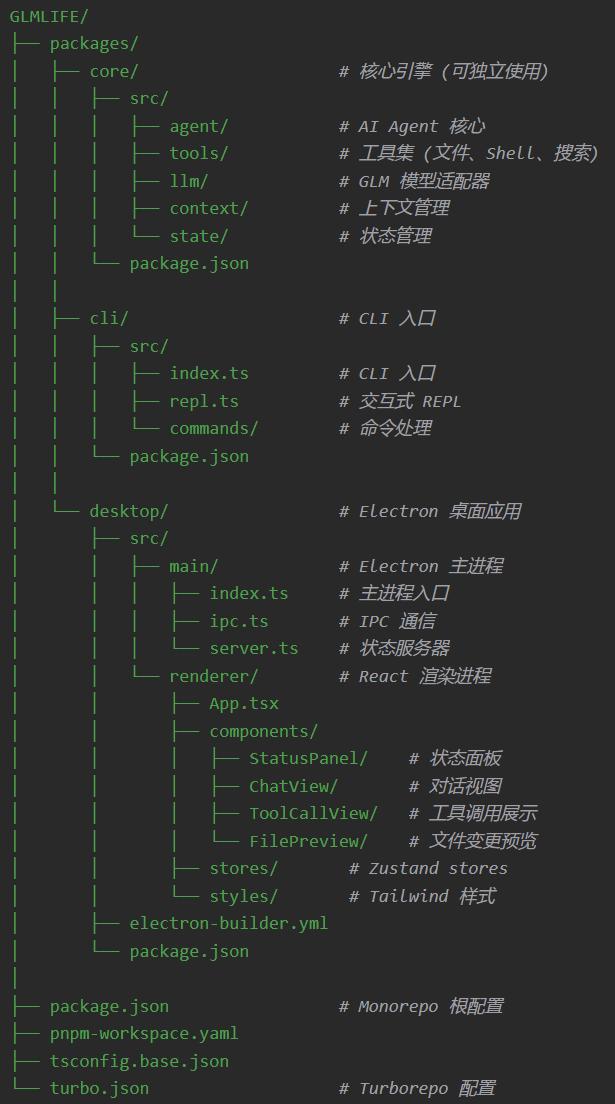

It opted for a monorepo architecture, cleanly splitting the project into three core packages:

- Core: Responsible for the core agent engine and LLM adaptation;

- CLI: Handles command-line interactions;

- Desktop: The main desktop application based on Electron + React 18.

From Zustand for state management and Tailwind for styling to complex inter-process communication (IPC), GLM-5 worked like a technical director with a decade of experience, laying out each technical choice clearly.

The developer had expected environment setup alone to take three days, but the entire process, from environment setup and core logic implementation to Electron packaging, was finished in a single day.

On opening GLMLIFE, the developer could hardly believe it was a product an AI had built in just one day.

GLMLIFE Mini Piano Implementation Process

Why Can It Become the “Opus of Open Source”?

Globally, Claude Opus 4.6 and GPT-5.3-Codex are highly sought after due to their strong “architectural” capabilities.

- Opus 4.6’s Brute-Force Aesthetics: 16 parallel AI agents divided the work among themselves and, over two weeks, built a C compiler in Rust from scratch: 100,000 lines of code that pass 99% of the GCC torture tests.

- GPT-5.3’s Self-Creation: It is the first OpenAI model to “participate in its own creation”, with early versions helping with its own training process and cluster deployment.

However, all of this comes with a fatal catch: these models are not only closed-source but also expensive.

Against this backdrop, the release of GLM-5 marks a forceful breakthrough by China’s open-source large models into the agentic era.

It goes straight for the ground the closed-source giants are least willing to cede, system-level engineering, and mounts a “substitute-style” offensive against them.

1. New Backend Architect

The Zhipu team knows that the open-source field lacks models that can handle dirty, tedious, large-scale work. GLM-5’s training puts much more weight on backend architecture design, complex algorithm implementation, and fixing stubborn bugs, and the model gains a strong self-reflection mechanism.

When a compilation fails, it analyzes logs, identifies root causes, modifies code, and recompiles until the system runs smoothly, just like a seasoned engineer.
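The compile, analyze, fix, recompile cycle described above is essentially a bounded retry loop. Here is a minimal TypeScript sketch of that loop, not Zhipu’s implementation: `build`, `proposeFix`, and `applyFix` are hypothetical hooks, which in a real agent would run the compiler and ask the model for a patch based on the failure log.

```typescript
// Result of one build attempt (hypothetical shape).
interface BuildResult {
  ok: boolean;
  log: string;
}

// Hooks the loop depends on; all three are hypothetical.
interface RepairTools {
  build(): Promise<BuildResult>;
  proposeFix(log: string, attempt: number): Promise<string>;
  applyFix(patch: string): Promise<void>;
}

interface RepairOutcome {
  ok: boolean;
  attempts: number; // number of builds run
  patches: string[]; // fixes applied, in order
}

// Build, read the failure log, patch, rebuild; stop on success or after maxAttempts builds.
async function buildUntilGreen(tools: RepairTools, maxAttempts = 5): Promise<RepairOutcome> {
  const patches: string[] = [];
  for (let attempt = 1; ; attempt++) {
    const result = await tools.build();
    if (result.ok) return { ok: true, attempts: attempt, patches };
    if (attempt >= maxAttempts) return { ok: false, attempts: attempt, patches };
    // Pass the full log, not just the first error line, so the fix can target the root cause.
    const patch = await tools.proposeFix(result.log, attempt);
    await tools.applyFix(patch);
    patches.push(patch);
  }
}
```

The attempt cap matters in practice: without it, an agent that keeps misreading the same error would loop forever instead of escalating to a human.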

2. Real Work Means Counting the Cost

With performance comparable to Opus and the advantages of being open-source, GLM-5 has, to some extent, shaken the walled gardens built by Anthropic and OpenAI.

- Local Deployment: It can run on completely isolated intranets and be fine-tuned on a company’s private frameworks, becoming the specialist that knows that company’s code best.

- Cost Control: Users can run a powerful Coding Agent on consumer-grade GPU clusters without worrying about costs for each test run.

Setting SOTA Records

GLM-5’s evolution can be summed up in a word: brute force.

As a foundation model built for complex systems engineering, it has been scaled up aggressively.

Total parameters jumped from 355B (32B active) to 744B (40B active), and pre-training data grew from 23T to 28.5T tokens.
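Putting those figures side by side (simple arithmetic on the numbers above, nothing more) shows that total size roughly doubled while per-token compute grew far less, so the share of parameters active for each token actually fell:

```latex
\begin{aligned}
\text{total parameters: } & \tfrac{744}{355} \approx 2.1\times \\
\text{active parameters: } & \tfrac{40}{32} = 1.25\times \\
\text{pre-training tokens: } & \tfrac{28.5}{23} \approx 1.24\times \\
\text{active share: } & \tfrac{32}{355} \approx 9.0\% \;\to\; \tfrac{40}{744} \approx 5.4\%
\end{aligned}
```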

Besides being “large,” it also needs to be “efficient.”

Agent workloads are notoriously token-hungry.

To address this pain point, GLM-5 adopts DeepSeek Sparse Attention (DSA), a first for the GLM series.

This lets it keep “lossless” recall over ultra-long contexts while substantially cutting deployment costs.
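For readers new to the idea, the core of top-k sparse attention fits in a few lines. The TypeScript sketch below shows the generic technique for a single query, not DeepSeek’s or Zhipu’s actual kernels. DSA uses a small learned “indexer” to score tokens cheaply; this sketch reuses the plain query-key dot product as a stand-in scorer, so it illustrates the selection step rather than the savings. `sparseAttention` and its parameters are hypothetical names.

```typescript
type Vec = number[];

function dot(a: Vec, b: Vec): number {
  let s = 0;
  for (let i = 0; i < a.length; i++) s += a[i] * b[i];
  return s;
}

// Top-k sparse attention for one query: score all keys, keep the k best,
// then run ordinary scaled softmax attention over just those k tokens.
function sparseAttention(q: Vec, keys: Vec[], values: Vec[], k: number): Vec {
  if (keys.length === 0 || k < 1) throw new Error("need at least one key and k >= 1");
  // 1. Score every past token (stand-in for a learned lightweight indexer).
  const ranked = keys
    .map((key, idx) => ({ idx, score: dot(q, key) }))
    .sort((a, b) => b.score - a.score)
    .slice(0, k);
  // 2. Scaled logits for the selected tokens only; subtract the max for numerical stability.
  const scale = 1 / Math.sqrt(q.length);
  const logits = ranked.map((t) => t.score * scale);
  const maxLogit = Math.max(...logits);
  const weights = logits.map((l) => Math.exp(l - maxLogit));
  const total = weights.reduce((sum, w) => sum + w, 0);
  // 3. Softmax-weighted sum of the selected value vectors.
  const out: Vec = new Array(values[0].length).fill(0);
  ranked.forEach((t, j) => {
    const w = weights[j] / total;
    values[t.idx].forEach((v, d) => {
      out[d] += w * v;
    });
  });
  return out;
}
```

The softmax-and-sum step touches only `k` tokens instead of all `n`; in a real system the saving comes from making the scoring pass much cheaper than full attention.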

There is also a more ambitious piece of in-house technology: Slime, a new asynchronous reinforcement learning framework.

Combined with large-scale reinforcement learning, it transforms the model from a “one-time tool” into a “long-distance runner” that becomes smarter over time.

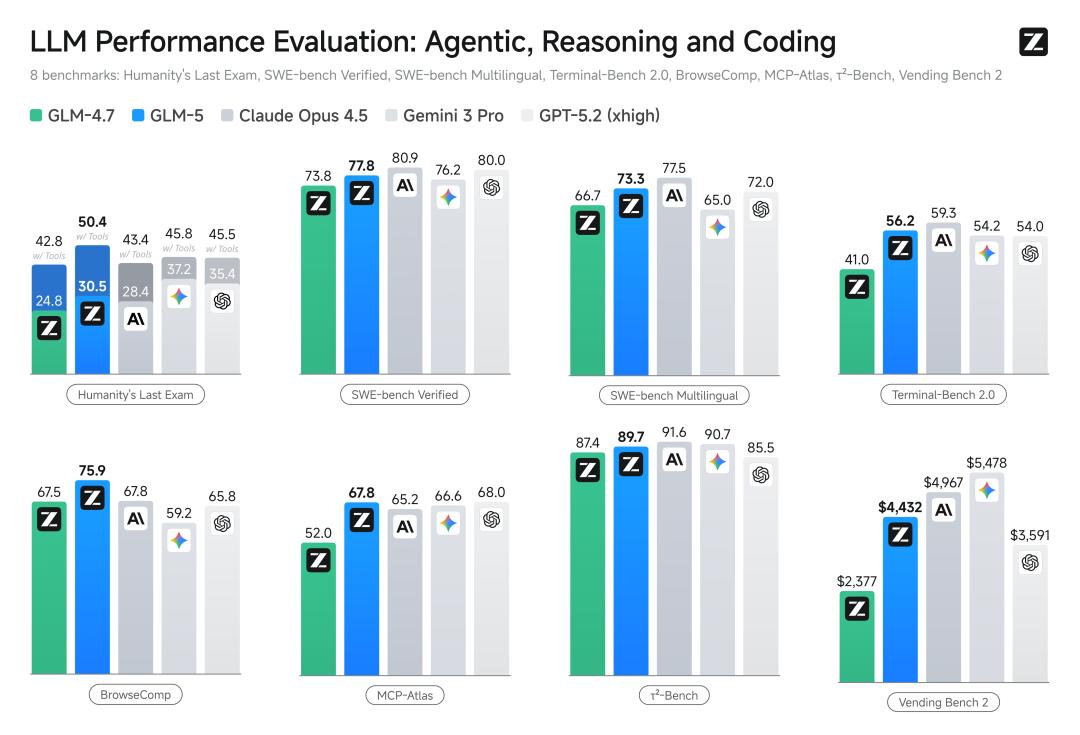

The benchmark results are equally hardcore:

- Coding Ability

It scored 77.8 on SWE-bench Verified and 56.2 on Terminal Bench 2.0, both first among open-source models. These scores surpass Gemini 3.0 Pro and come close to Claude Opus 4.5.

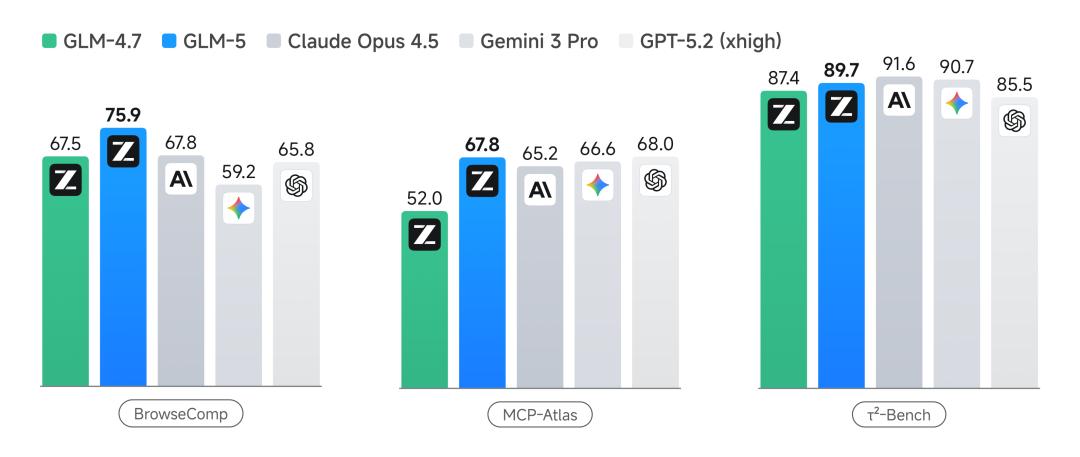

- Agent Capabilities

BrowseComp (online retrieval), MCP-Atlas (tool invocation), and τ²-Bench (complex planning): it ranked first among open-source models on all three.

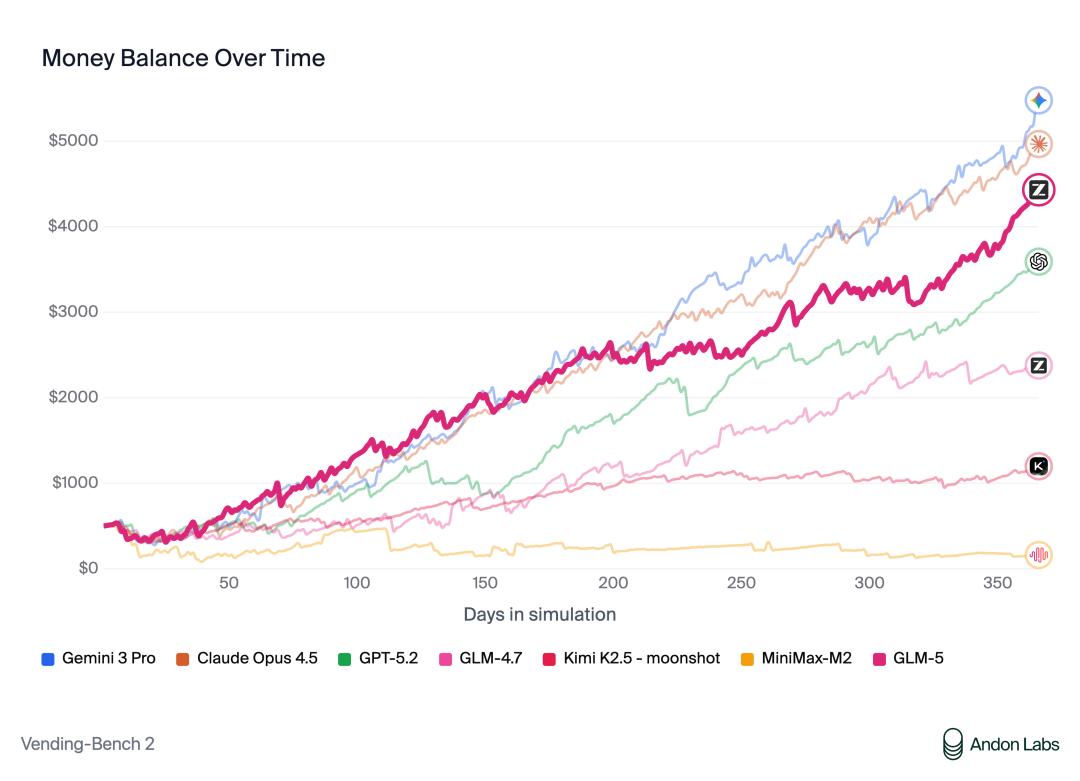

Interestingly, in Vending Bench 2 (a vending-machine operation test), the model has to run a vending machine for a full year entirely on its own. Guess what? By year’s end, GLM-5 had made $4,432, nearly catching up with Opus 4.5.

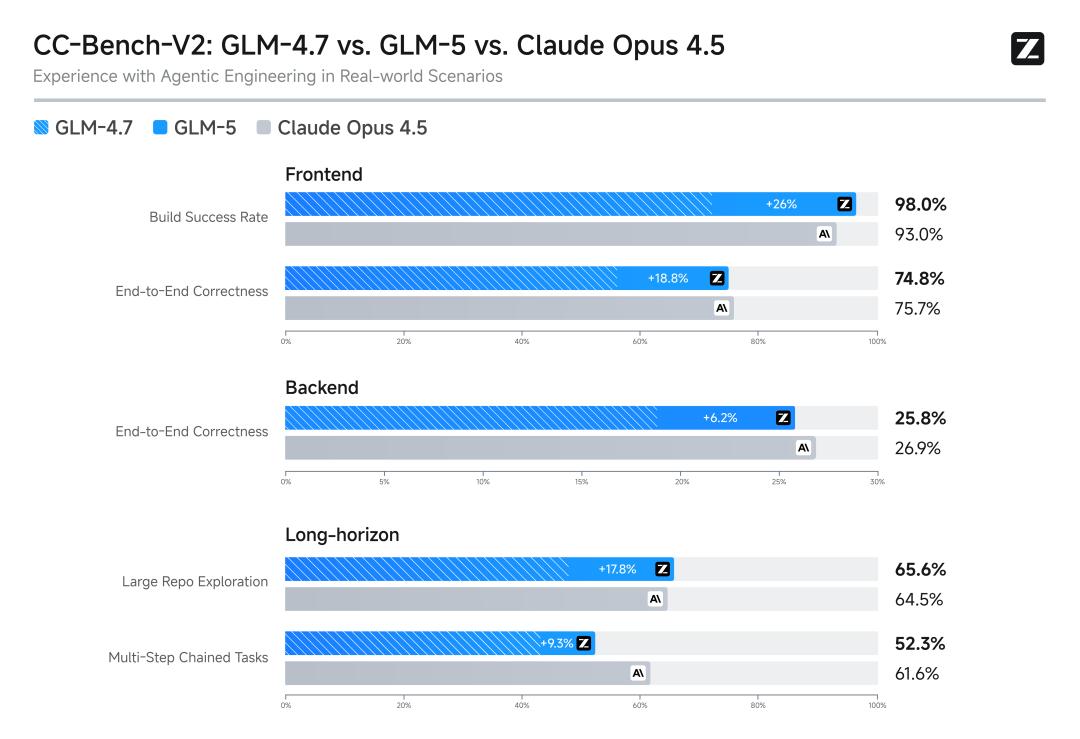

In internal evaluations of what developers care about most (front-end, back-end, and long-horizon programming tasks), GLM-5 significantly outperformed its predecessor GLM-4.7, by more than 20% on average.

In real-world use, the experience is already approaching Opus 4.5.

AI Creating AI

Of course, GLM-5’s ambitions extend beyond the model itself; it aims to reshape the programming tools we use every day.

The globally popular OpenClaw has already shown us the potential of AI that operates computers.

This time, Zhipu also launched the AutoGLM version of OpenClaw.

In the original version, just setting up the environment required a lot of effort, but now it can be deployed with a single click on the official website.

Want a “digital intern” that monitors Twitter, organizes information, and even writes scripts for you 24/7? Just click, and it’s done.

Zhipu also released Z Code, a new generation of development tool built entirely on GLM-5’s capabilities.

With Z Code, you only need to state the requirements: the model breaks them down into tasks automatically and can even launch multiple agents that work concurrently, writing code, running commands, debugging, previewing, and even handling Git commits.
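Z Code’s internals are not described here, so the following only illustrates the general pattern behind running several agents concurrently: a bounded worker pool in TypeScript. `Subtask`, `runAgents`, and the `limit` parameter are hypothetical; in a real tool each `run` would drive one agent through writing code, running commands, or debugging.

```typescript
// Hypothetical unit of work produced by a planning step.
interface Subtask {
  id: string;
  run: () => Promise<string>;
}

// Run subtasks with at most `limit` in flight; results keep the input order.
async function runAgents(tasks: Subtask[], limit: number): Promise<string[]> {
  const results: string[] = new Array(tasks.length);
  let next = 0;
  // Each worker repeatedly claims the next unclaimed task. Claiming is safe without
  // locks because JavaScript only switches between workers at `await` points.
  async function worker(): Promise<void> {
    while (next < tasks.length) {
      const i = next++;
      results[i] = await tasks[i].run();
    }
  }
  const poolSize = Math.max(1, Math.min(limit, tasks.length));
  await Promise.all(Array.from({ length: poolSize }, () => worker()));
  return results;
}
```

Capping concurrency keeps a burst of subtasks from exhausting API rate limits or local resources, while still letting independent work proceed in parallel.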

You can even direct the desktop agents remotely from your phone.

Notably, just as OpenAI used Codex to build Codex, Z Code itself was developed with the GLM model involved throughout.

A Win for China’s Homegrown Computing Power

Behind the global traffic explosion and surging demand for agents driven by GLM, a group of “unsung heroes” is quietly supporting the massive computational load.

To ensure that every line of code and every agent plan is delivered reliably, GLM-5 has been deeply adapted to Chinese computing platforms, including mainstream ones such as Huawei Ascend, Moore Threads, Cambricon, Kunlun Core, Muxi, Suiruan, and Haiguang.

Thanks to careful operator-level optimization, GLM-5 achieves high throughput and low latency even on clusters of Chinese-made chips.

This means we have not only a top-tier model but also one that is not constrained by anyone else’s hardware.

Conclusion

In the spring of 2026, large coding models have finally grown up.

What Karpathy calls “Agentic Engineering” essentially sets a tougher “job interview” for AI:

- Previously (Vibe Coding): As long as you could write beautiful HTML, you were hired.

- Now (Agentic Coding): You need to understand the Linux kernel, the interconnections between 500 microservices, how to refactor code without crashing the online system, and be able to plan tasks and fix bugs independently.

GLM-5 is not perfect.

But on the core question of “building complex systems,” it is currently the only open-source player capable of riding this “agentic” wave.

Vibe Coding is over.

Stop asking AI, “Can you help me write a webpage?” That was a question for 2025.

Now, try asking it: “Can you help me refactor the core module of this high-concurrency system?”

GLM-5, Ready to Build!

Easter Egg

GLM-5 is already included in the Max plan, and Pro will support it within 5 days!

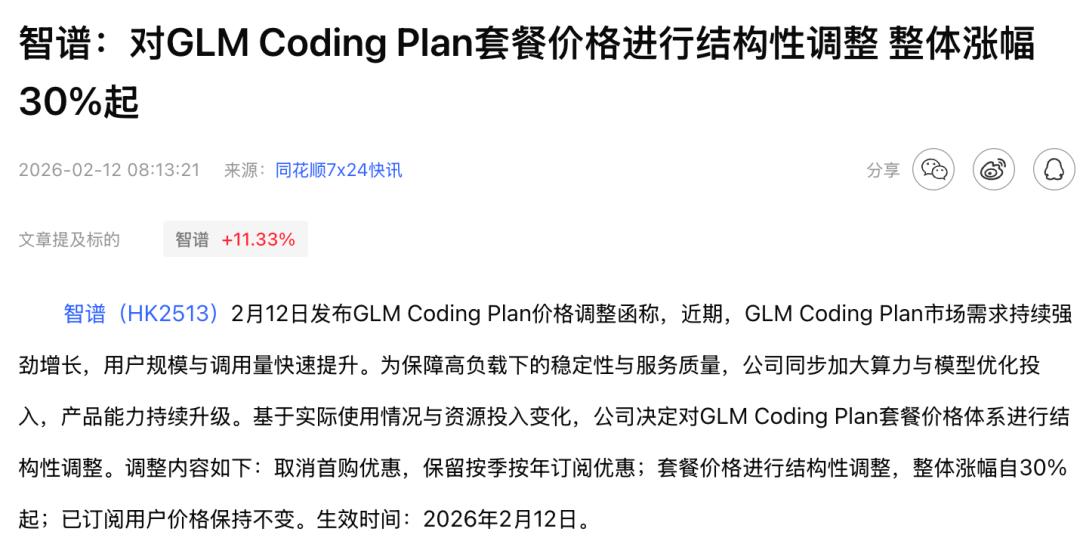

Additionally, Zhipu has just announced a price increase; token prices are bound to rise this year!

Hurry up and experience it!

Official API Access

· BigModel Open Platform:

https://docs.bigmodel.cn/cn/guide/models/text/glm-5

· Z.ai:

https://docs.z.ai/guides/llm/glm-5

· OpenClaw Access Documentation:

https://docs.bigmodel.cn/cn/coding-plan/tool/openclaw

Open Source Links

· GitHub:

https://github.com/zai-org/GLM-5

· Hugging Face:

https://huggingface.co/zai-org/GLM-5

· ModelScope:
