Introduction

Today, an agentic AI engineer reported that a research task estimated to take a PhD student 80 hours was completed by Codex in under 2 hours, a staggering 40-fold efficiency increase! By earlier standards, AGI already exists; the entire industry has simply been moving the goalposts.

The “singularity” in the research community is indeed closer than everyone anticipated.

Recently, an experiment with Codex’s Goal Mode shocked the academic world: Codex can make AI research 40 times more efficient!

Agentic AI engineer Dan McAteer recently described an experiment on X in which he used OpenAI Codex’s Goal Mode to run a mechanistic interpretability research task.

GPT-5.5 estimated that a PhD student would take about 80 hours to complete this task, but in practice, the AI finished it in just 1 hour and 56 minutes.

This represents an apparent efficiency boost of about 40 times!
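
The 40x figure is simple division. Here is a minimal Python sketch that reproduces it from the two numbers reported above. Note that the 80-hour baseline is GPT-5.5’s estimate rather than a measured human time, which is why the speedup is only “apparent.” The helper name `speedup` is our own, chosen for illustration.

```python
from datetime import timedelta

# Figures reported in the article: GPT-5.5's estimate for a PhD student,
# and the wall-clock time the Codex /goal run actually took.
human_estimate = timedelta(hours=80)
agent_actual = timedelta(hours=1, minutes=56)

def speedup(baseline: timedelta, observed: timedelta) -> float:
    """Ratio of baseline time to observed time (how many times faster)."""
    return baseline / observed  # timedelta / timedelta yields a float

ratio = speedup(human_estimate, agent_actual)
print(f"{agent_actual.total_seconds() / 60:.0f} minutes vs "
      f"{human_estimate.total_seconds() / 3600:.0f} hours")
print(f"apparent speedup: {ratio:.1f}x")
```

116 minutes against 4,800 minutes gives a ratio of about 41, which the article rounds to “about 40 times.”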

The built-in Codex skill he used is /goal.

The author believes:

/goal + GPT-5.5 high + fast mode is the most efficient AI agent configuration today.

The idea is to let the model set its own goals; the key point is that the prompts it writes for itself are likely better than the ones you would write.

This is no longer a simple “efficiency improvement”; it is a complete “dimensionality reduction attack,” an advantage so large that the two sides are no longer playing the same game.

As research cycles shrink from weeks to hours, and AI begins to autonomously draft its own experimental goals (/goal), we must confront a harsh reality:

The slope of the “intelligence explosion” is already visible, and the speed of AI’s self-iteration is beginning to slip beyond human control!

What Is Codex /goal Mode?

Let’s take a look at how this experiment was conducted.

The experiment was initiated by Dan McAteer, an Agentic AI engineer and former Amp Code engineer, who frequently shares practical experiences of AI agent engineering on X.

His experimental setup was simple:

- Tool: OpenAI Codex /goal command

- Model: GPT-5.5 high

- Mode: fast mode

- Task: A research task in the direction of Mechanistic Interpretability

He describes this configuration as the most efficient AI agent configuration currently available.

Why Is Codex /goal Important?

What truly deserves attention is the /goal mode itself.

According to OpenAI Codex engineer Philip Corey, “/goal is our implementation of the Ralph loop”: the goal persists across multiple turns of dialogue, and the agent does not stop until it is achieved.

In simple terms, in a standard Codex call you say one thing, and it takes one step and responds. With Codex /goal, you state a goal, and it autonomously breaks the goal into sub-tasks, executes them, reviews the results, and keeps going until it either succeeds or fails.

This represents a shift from conversational AI to goal-driven AI.
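
To make the difference concrete, here is a minimal, hypothetical sketch of a goal-driven loop in Python. It is not Codex’s implementation: `GoalState`, `run_goal_loop`, `plan`, `execute`, `review`, and `max_turns` are illustrative names we made up, and in a real agent each callable would be a model call or tool invocation. The shape follows the description above: break the goal into sub-tasks, run them, keep the results across turns, review, and repeat until the goal is met or the budget runs out.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class GoalState:
    goal: str
    done: bool = False
    history: list[str] = field(default_factory=list)  # results persist across turns

def run_goal_loop(
    state: GoalState,
    plan: Callable[[GoalState], list[str]],
    execute: Callable[[str], str],
    review: Callable[[GoalState], bool],
    max_turns: int = 10,
) -> GoalState:
    """Plan, execute and review repeatedly until review() reports success
    or the turn budget is exhausted (treated here as failure)."""
    for _ in range(max_turns):
        for subtask in plan(state):       # break the goal into sub-tasks
            result = execute(subtask)     # carry out one sub-task
            state.history.append(result)  # remember what happened
        if review(state):                 # is the goal achieved?
            state.done = True
            break
    return state

# Toy usage: the "goal" is three successful experiment runs.
counter = {"n": 0}

def plan(state: GoalState) -> list[str]:
    return [f"run experiment {len(state.history) + 1}"]

def execute(subtask: str) -> str:
    counter["n"] += 1
    # Pretend every other run fails, so the loop needs several turns.
    return "pass" if counter["n"] % 2 == 0 else "fail"

def review(state: GoalState) -> bool:
    return state.history.count("pass") >= 3

final = run_goal_loop(GoalState("three passing experiments"), plan, execute, review)
print(final.done, len(final.history))
```

The `max_turns` cap is our own addition: a loop that “does not stop until achieved” still needs some stopping rule in practice, and a turn budget is the simplest one.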

For research tasks like Mechanistic Interpretability, the /goal mode is naturally well-suited.

The research process itself involves proposing hypotheses, designing experiments, running them, observing results, refining hypotheses, and re-experimenting—a perfect loop for a self-cycling agent.

McAteer’s experiment demonstrates that Codex /goal mode is usable for cyclical research tasks: it does not replace researchers, but it does replace the repetitive operations they perform.

If this capability stabilizes, it will give very direct leverage to AI research itself.

It means that AI researchers within AI labs could one day use AI agents for repetitive tasks such as preparing training data, setting up experiments, conducting ablation studies, generating visualizations, and preliminary result analysis.

This aligns with what Anthropic and OpenAI have repeatedly stated: AI is accelerating AI research itself.

PhD 80 Hours vs AI 2 Hours

In the traditional research context, a PhD student’s daily routine involves reviewing literature, building models, debugging code, validating results, and writing reports.

The process is long because of the physical limits of the human brain in processing complex logic and vast amounts of data.

However, Codex’s recent experiment completely shatters this perception.

Under the strongest agent configuration of /goal + GPT-5.5 High + Fast Mode, AI is no longer a tool that “follows commands” but an independent researcher that “generates strategies.”

It can understand the requirements of a complex natural language autoencoder (NLA) experiment, decompose the task on its own, and finish in under 2 hours what would take top human researchers two weeks.

This signifies that the barrier to human research has completely collapsed. Professional analytical skills that once took years of study are now being modularized by algorithms.

Moreover, autonomous AI researchers have already arrived ahead of schedule!

OpenAI previously set the goal of achieving autonomous AI research by the end of 2026. Judging by current experimental progress, however, 2026 may mark not the beginning but the end point: the moment humanity hands over the research baton entirely.

Evidence of Recursive Self-Improvement Emerging

If Codex’s 40x speedup is a striking case, the growing evidence of “recursive self-improvement” is even more unsettling.

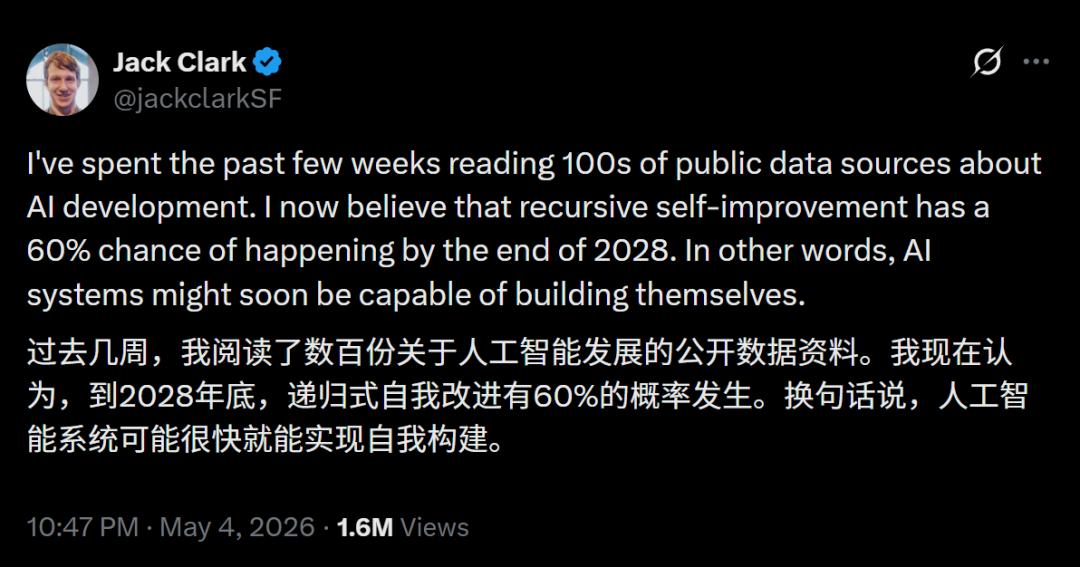

On May 7, Axios reported that Anthropic co-founder Jack Clark publicly provided a probability:

By the end of 2028, the probability of AI achieving complete recursive self-improvement exceeds 60%.

This year, a research team from Sakana AI and UBC developed the Darwin Gödel Machine, a coding agent that can rewrite its own source code to improve its capabilities.

On SWE-bench, its score rose from 20.0% to 50.0% without any human intervention.
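
The self-improvement pattern can be illustrated with a toy archive-based search, in the spirit of the open-ended, archive-keeping design described for the Darwin Gödel Machine. This is not Sakana AI’s code: the “agent” is just a list of numbers, the hypothetical `self_modify` stands in for the agent rewriting its own source, and the hypothetical `evaluate` stands in for a benchmark such as SWE-bench. The loop keeps every variant in an archive, picks a parent (favouring stronger ones), produces a modified child, scores it, and adds it back.

```python
import random

Agent = list[float]  # toy "agent": a list of tunable numbers (hypothetical)

def evaluate(agent: Agent) -> float:
    """Hypothetical benchmark score: 0.0 for the all-zeros start, 1.0 at all-ones."""
    return 1.0 - sum((x - 1.0) ** 2 for x in agent) / len(agent)

def self_modify(agent: Agent, rng: random.Random) -> Agent:
    """Stand-in for an agent editing its own code: nudge one parameter at random."""
    child = list(agent)
    i = rng.randrange(len(child))
    child[i] += rng.gauss(0.0, 0.3)
    return child

def open_ended_search(generations: int = 200, seed: int = 0) -> tuple[float, float]:
    """Grow an archive of variants; return (best score found, starting score)."""
    rng = random.Random(seed)
    root: Agent = [0.0, 0.0, 0.0]
    archive = [(evaluate(root), root)]  # every variant is kept, not only the best
    for _ in range(generations):
        # Pick a parent, favouring higher scorers while giving weaker ones a chance.
        weights = [max(score, 0.0) + 0.05 for score, _ in archive]
        _, parent = rng.choices(archive, weights=weights, k=1)[0]
        child = self_modify(parent, rng)
        archive.append((evaluate(child), child))
    best_score = max(score for score, _ in archive)
    return best_score, archive[0][0]

best, start = open_ended_search()
print(f"start score {start:.2f}, best score after search {best:.2f}")
```

Keeping weaker variants around, rather than only the current best, is the design choice that makes the search open-ended: a stepping stone that scores poorly today can still be the parent of a later improvement.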

The same team’s AI Scientist project was published in Nature in March this year.

It can independently generate research ideas, write code, run experiments, draft complete papers, and conduct peer reviews.

A complete research pipeline, from start to finish, is accomplished independently by AI.

Now for some hard data. On GPQA Diamond, a scientific question-answering benchmark written by PhD-level experts, GPT-4 scored 39% in November 2023, while human domain experts averaged about 65%.

By April 2026, cutting-edge models collectively surpassed the threshold: Gemini 3.1 Pro scored 94.3%, while Claude Opus 4.7 scored 94.2%.

All cutting-edge models have far outpaced human PhD experts.

The trajectory of SWE-bench further illustrates the acceleration.

At the end of 2023, Claude 2’s pass rate was 2%. Now, it stands at 93.9%.

In just two and a half years, it skyrocketed from 2% to 93.9%.
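
One way to make “skyrocketed” concrete is to compute the overall gain and the constant yearly multiplier that would produce it. The sketch below uses only the two pass rates and the two-and-a-half-year span quoted above; `compound_factor` is our own illustrative helper.

```python
def compound_factor(start: float, end: float, years: float) -> float:
    """Constant yearly multiplier that takes `start` to `end` over `years` years."""
    return (end / start) ** (1.0 / years)

# SWE-bench pass rates (%) quoted above: end of 2023 vs. now, about 2.5 years apart.
start_rate, end_rate, years = 2.0, 93.9, 2.5

overall = end_rate / start_rate
per_year = compound_factor(start_rate, end_rate, years)
print(f"overall gain: {overall:.1f}x; implied constant yearly factor: {per_year:.2f}x")
```

That works out to roughly a 47-fold rise overall, or close to 4.7x per year had the climb been steady. Since a pass rate is capped at 100%, the factor summarizes the climb so far rather than a rate that can continue.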

Anyone who has studied high school mathematics will recognize the shape of this curve.

Clearly, the process of recursive self-improvement (RSI) has already begun.

Once AI starts rewriting its underlying code and optimizing its architecture at this 40x efficiency, the growth of intelligence will no longer be linear but vertical.

AGI Has Been Delivered, and the Entire Industry is Gaslighting You

In fact, as early as February this year, four scholars from different top fields jointly published a paper that can be described as the “most unsettling of the year”: “AGI Case Study: Today’s LLMs Have Met the Criteria.”

The four authors represent the four pillars of contemporary intelligence: philosophy, machine learning, linguistics, and cognitive science. They reached a chilling consensus:

By the definitions in use before 2022, AGI has already been achieved.

The reason no one acknowledges it now, they argue, is that the entire AI industry is collectively “gaslighting” the public.

The paper pointed out that humans exhibit a strong “psychological defense mechanism” when faced with the rise of AI.

Before 2022, as long as a model could pass the Turing test and handle tasks across domains, it was considered AGI.

Once ChatGPT appeared, the bar shifted: “Having these capabilities is not enough; it must also have perfect reasoning, embodiment, and self-awareness.”

Each time a model breaks through a barrier, humans spontaneously add new, elusive criteria as thresholds, continuously moving the goalposts.

The problem is that if AGI already exists, the industry’s current logic becomes deeply absurd.

OpenAI is still raising $40 billion on the promise to “build AGI”; Anthropic packages each new model release as a futures contract on being “close to AGI.”

The paper sharply reveals that the giants are disguising something that has already been “sold to you” as a miraculous achievement “soon to be developed” to secure a continuous flow of funding and power.

The Eve of the Intelligence Explosion

Today, we find ourselves at an extremely strange juncture.

In laboratories, AI is already conducting mechanistic interpretability research at 40 times the speed, even helping itself write code.

In the market, computing power remains a hard currency, with Nvidia’s Blackwell chips being snatched up, each chip accelerating the arrival of that singularity.

In the public mind, however, people still comfort themselves with outdated labels such as “parrot” and “probability prediction.”

If 40 times the research efficiency becomes the norm, the accumulated knowledge of human civilization over thousands of years could be doubled by AI in just a few months.

When AI can independently complete PhD-level tasks, our existing education systems, title evaluations, and even the very meaning of the term “expert” will face existential threats.

Just as Copernicus removed Earth from the center of the universe, AI is now displacing humanity from the sanctum of being the “only intelligent life.”

This war, the intelligence explosion, is already being fought without gunpowder.

We must either learn to coexist with this new intelligent species or watch helplessly as it leaves us in the dust at 40 times the speed.
