Introduction

The evolution of artificial intelligence raises the question: will it eventually replace humans?

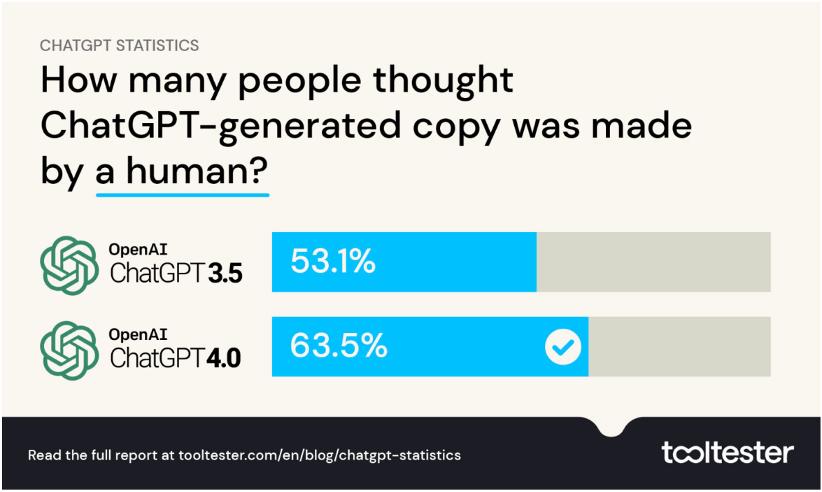

Tooltester, a statistics website, recently released a survey report on ChatGPT usage. The survey was conducted in two rounds. The first took place in late February 2023 and involved 1,920 American ChatGPT users. Participants were shown 75 text segments (some written by humans, some by machines, and some by machines with human editing) and asked to identify the source of each. After the release of GPT-4, an additional 1,394 participants were surveyed. The results indicate that chatbots are iterating faster than the public expected, and that most users have a limited understanding of how sophisticated machine-generated content has become, which makes it difficult for them to tell which online content is human-written.

Key Findings

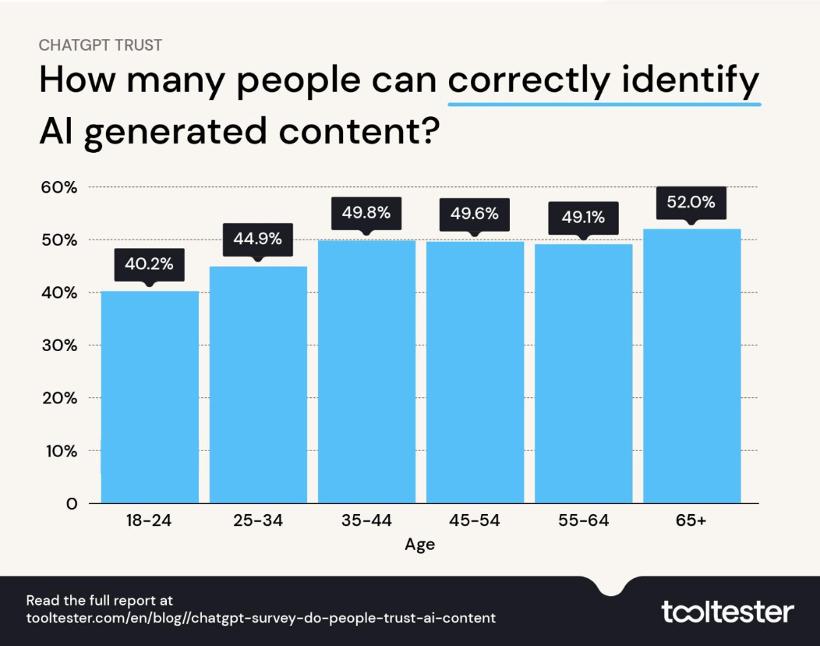

In terms of content recognition, 53.1% of participants could not accurately identify the machine-written paragraphs, a share that rose to 63.5% after the launch of GPT-4. Even among those more familiar with AI tools, only 48% made correct judgments. Young people aged 18-24 were particularly susceptible to being misled by machine writing: 59.8% of them could not make accurate distinctions, despite their frequent exposure to such content. Interestingly, adults aged 65 and above had the highest accuracy in identifying machine writing, at 52%.

Content Domains

Are machines better at writing on specific topics? The results show that AI chatbots excel in generating content related to health and travel. Participants were more likely to mistakenly attribute AI-generated articles on topics like the side effects of paracetamol, fitness plans, car rental tips, and hotel savings strategies to human authors. This suggests that AI-generated health and travel tips may seem more human-like than those written by actual humans.

Conversely, technical articles were easier to identify: 51% of participants could tell which "tech posts" were AI-generated. In this area, female participants performed slightly better than male participants (52.4% vs. 49.9%). The survey team warns that these results may point to a dangerous shift toward a future in which AI is deeply integrated into everyday life, including healthcare.

Trust and Regulation

The survey also gauged participants' perceptions of, and trust in, machine-generated content. Over 80% supported establishing regulations for machine writing. Furthermore, 71.3% said that if content providers such as businesses or publishers released AI-generated content without disclosure, their trust in the brand would fall significantly. Overall, people prefer that content providers actively disclose how their content is produced, though whether this will become the norm on the internet remains to be seen.

Since OpenAI launched ChatGPT on November 30, 2022, discussion of artificial intelligence has surged. The chatbot, which can understand context, converse in natural language, and complete tasks such as writing emails and generating topic ideas, attracted millions of users within days. The release of GPT-4 in March 2023 has continued to challenge public perceptions. This survey report reaffirms a fundamental reality: we are indeed struggling to distinguish between human and machine writing.
